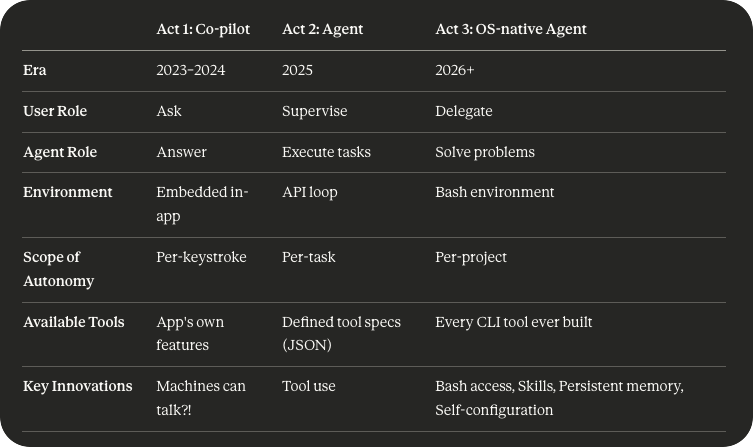

AI's third and final act

Ghost in the Shell

AI’s third act started sometime in the middle of December 2025.

Strangely, it didn’t happen until a few weeks after the real trigger point (the launch of Opus 4.5). It took some time to really understand the magnitude of how smart models had become.

But once we saw the capabilities, things sped up. Developers spent the holiday season feverishly building with Claude Code. Mostly Claude Code plugins, in fact. We built better while loops. Agent-managed context. Multi-agent setups. iMessage gateways.

The shape of AI’s third act has emerged. You still have to squint a bit to see it. But it looks powerful.

Act 1: Tab tab tab

Act 1 was the Copilot era. Context windows were small and RAG was big. Every AI startup was working hard on prompt engineering to show they weren’t just a wrapper.

The end result was a world of chat sidebars that sat inside a legacy app, but completely separate from it. Cheerfully offering to answer onboarding questions while the user kept navigating their HubSpot.

Act 2: Finally counting r’s

Act 2 was the Agent era. While models learned to reason, we realized for loops work. The AI could drive short errands on a leash, with a small set of tools. AI engineers were obsessed with making those tools complete and reliable.

There was a glimpse of real utility. Coding agents were actually speeding up workflows. Every influencer thumbnail was a giant n8n graph. Image and video gen really started to work.

But: our collective hesitancy to take the models off the leash held back their full potential.

Act 3: Superintelligence is a lobster?

It’s tempting to say the difference between Acts 2 and 3 is “better models.” But the real shift is the interface. We’ve gone from a JSON spec to a full computer. It’s like going from a gardening shovel to a backhoe.

And that’s Act 3. We put a smart model in a sandboxed environment with full bash access. Add some context (more importantly, context on how to find more context). Give it the ability to talk with itself after it exhausts its context window. And put it in an aggressive for loop that doesn’t stop until it’s completed its mission.
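To make that recipe concrete, here is a minimal sketch of the loop in Python. Everything in it is an assumption for illustration: `Model`, `agent_loop`, and `scripted_model` are hypothetical names, the "model" is a scripted stand-in rather than a real API call, and the commands run directly on the host instead of in a sandbox. It also skips the context handoff entirely. It is not how Claude Code or any particular product is implemented.

```python
import subprocess
from typing import Callable, List, Optional

# Hypothetical model interface (an assumption, not a real API): given the
# transcript so far, return the next bash command, or None when the model
# judges the mission complete.
Model = Callable[[List[str]], Optional[str]]


def run_bash(command: str, timeout: int = 30) -> str:
    """Run one command in bash and return combined stdout and stderr."""
    result = subprocess.run(
        ["bash", "-c", command],
        capture_output=True,
        text=True,
        timeout=timeout,
    )
    return (result.stdout + result.stderr).strip()


def agent_loop(model: Model, mission: str, max_steps: int = 20) -> List[str]:
    """Ask the model for commands until it says it is done (or steps run out)."""
    transcript = [f"MISSION: {mission}"]
    for _ in range(max_steps):
        command = model(transcript)
        if command is None:  # the model decided the mission is complete
            break
        output = run_bash(command)
        # Feed both the command and its output back as context for the next step.
        transcript.append(f"$ {command}")
        transcript.append(output)
    return transcript


def scripted_model(transcript: List[str]) -> Optional[str]:
    """Stand-in for a real model: replays a fixed list of commands."""
    steps = ["echo hello", "echo $((2 + 3))"]
    # Each completed step adds two transcript entries after the mission line.
    steps_done = (len(transcript) - 1) // 2
    return steps[steps_done] if steps_done < len(steps) else None


if __name__ == "__main__":
    for line in agent_loop(scripted_model, "say hello, then add two numbers"):
        print(line)
```

Swap `scripted_model` for a real model call and `run_bash` for a sandboxed executor and the shape stays the same, which is roughly the point: the loop is tiny, and the capability comes from the model and the shell.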

Who would have guessed that bash was the gateway that brings superintelligent coding agents to general purpose work?

But it makes perfect sense: when the agent has an OS, every CLI tool ever written becomes a capability. And the utility of an agent increases exponentially with the number of interfaces.

The first-order effects are clear. Within months, our work will look a lot more like:

“Hey Claude, could you pull the todos from my meeting transcript with Paul, add mine to my Todoist, book a follow-up, collect Ashley’s comments on the plan, and start drafting the slides we talked about?”

But the second-order effects are more interesting. The era of ‘AI is a chatbot’ is dying. OS-native architecture unlocks heartbeats, always-on agents, and AI feeling a lot more like an active entity than an answer machine.

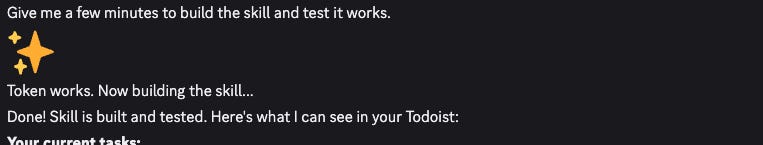

And the third-order effects get weird. Now, an agent also has the ability to self-configure. My single biggest ‘feel the AGI’ moment happened when I asked my Moltbot OpenClaw if it had a Todoist integration. It didn’t, but then it proceeded to cheerfully build one on the spot.

There’s something embarrassingly (bitterly?) simple about Act 3.

Just Give It Bash.

The sophistication is in the lack of scaffolding. And the shape of the future is emerging.

So why won’t there be an Act 4?

Make no mistake: there’s plenty of room at the top. Interfaces and models will get much better in several ways:

1. Better memory (especially active learning)
2. Solving the necessary security and UI issues to bring Act 3 to the mainstream
3. Agent swarms. Or some new layer of abstraction on top of monitoring several agents by hand
4. Completing the move from ‘reactive’ to ‘proactive’ (smart cron)
5. Better browser/GUI navigation

But (except for maybe the 3rd one) none of these look fundamentally different than “bash commands in a while loop.” And all of these are at least partially implemented today. Claude Code already uses subagents extensively and launched agent teams in preview last week. Some architectures already have a heartbeat.

So the remaining frontier isn't architectural. There simply aren’t many architectural limitations left to remove at this point.

The frontier is about trust and security. The real question now isn’t if an agent can do all these tasks. It’s whether it can do them securely. These are two of the most interesting areas for startups right now.

All of the smart people I know who follow this closely seem to believe that 2026 will be the year that things get weird. The shape of these agents might actually be the endgame board.

Why was Opus 4.5 so much better? You could point to better instruction following, coding ability, etc. But I think the biggest thing is error recovery and long-term task coherence.

It’s a real shame we wasted the term ‘Agents’ on Act 2, because it really is a better name for what’s happening now.